In the rapidly advancing digital epoch we inhabit, Artificial Intelligence (AI) stands out as a monumental breakthrough. As a linchpin of modern innovation, AI offers capabilities that were once considered the stuff of science fiction. Its applications, ranging from predictive analytics that forecast market trends to the development of autonomous vehicles that can navigate complex terrains without human intervention, have found a place in nearly every industry we can think of.

But like all technological marvels, AI is not without its challenges. The integration of AI systems, and our growing reliance on them, has exposed a plethora of vulnerabilities. These vulnerabilities, if left unchecked, could have dire consequences for individuals, businesses, and even entire societies. At the heart of these challenges is the overarching concern of AI cybersecurity.

The increasing incorporation of AI in our daily lives and businesses means that these systems handle rapidly growing volumes of data. This data, which often includes sensitive and personal information, becomes a prime target for cybercriminals. Without proper AI cybersecurity measures in place, these systems can become easy prey for hackers aiming to misuse the information, manipulate AI decision-making, or compromise the integrity of the AI system altogether.

Furthermore, because AI systems learn and adapt from vast datasets, even a slight manipulation of that data by malicious actors can skew their learning, leading to inaccurate, biased, or even harmful decisions. This underscores the importance of robust AI cybersecurity protocols that not only protect the data but also ensure the AI operates as intended.
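One simple way to catch that kind of tampering is to fingerprint a training set at the moment it is approved and compare against that fingerprint before each training run. The snippet below is a minimal sketch of the idea, not a complete defense: the function names `fingerprint` and `is_untampered` and the sample records are hypothetical, and it only detects changes made after approval.

```python
import hashlib
import json


def fingerprint(records):
    """Return a SHA-256 digest over a list of JSON-serializable training records."""
    digest = hashlib.sha256()
    for record in records:
        # sort_keys gives each record a stable byte representation
        digest.update(json.dumps(record, sort_keys=True).encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest()


def is_untampered(records, trusted_digest):
    """True when the records still match the digest recorded at approval time."""
    return fingerprint(records) == trusted_digest


# Hypothetical training set, fingerprinted when it was reviewed and approved.
approved = [
    {"text": "invoice overdue", "label": "spam"},
    {"text": "meeting at 10", "label": "ham"},
]
trusted = fingerprint(approved)

# A single flipped label is enough to change the fingerprint.
poisoned = [dict(approved[0], label="ham"), approved[1]]

print(is_untampered(approved, trusted))
print(is_untampered(poisoned, trusted))
```

A check like this says nothing about whether the approved data was clean in the first place, so it complements review of data sources rather than replacing it.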

In short, while AI continues to shape the contours of our digital age, weaving itself seamlessly into the fabric of our daily operations, it brings with it an urgent need for enhanced AI cybersecurity. This will be essential in ensuring that as we progress and evolve with AI, we do so safely, securely, and responsibly.

AI Cybersecurity and the Vital Role of Governance, Risk, and Compliance (GRC)

In the realm of artificial intelligence (AI), the magnitude and complexity of data involved have led to a heightened risk profile. As AI systems take on more responsibilities and process more data, the importance of AI cybersecurity cannot be stressed enough. At the crossroads of this challenge stands the Governance, Risk, and Compliance (GRC) framework – a pivotal tool in ensuring AI operates securely and efficiently.

1. Establishing Structured Governance

At its core, AI cybersecurity hinges on well-defined governance. By implementing a GRC framework, organizations can lay down a consistent set of rules and protocols that all AI systems must adhere to. This uniformity in governance not only facilitates smoother oversight but also streamlines management. The clarity it brings ensures that there’s a standardized response to potential security threats, thus enhancing the organization’s ability to protect its AI assets.

2. Risk Management in the Age of AI

One of the standout features of AI is its ever-evolving nature. As AI continues to learn and grow, so do the associated risks. Within the GRC ambit, risk management is a proactive approach to identifying, assessing, and mitigating these risks. AI cybersecurity mandates that risks aren’t merely addressed once but are continually monitored and re-assessed. This dynamic approach to risk management ensures that organizations remain a step ahead of potential threats.
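To make continuous re-assessment concrete, here is a minimal sketch of a risk register that flags entries whose last review is too old. Everything in it is an assumption for illustration: the `Risk` class, the 1 to 5 likelihood and impact scales, the 90-day review window, and the sample entries are hypothetical and not taken from any particular GRC product.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Risk:
    name: str
    likelihood: int      # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int          # assumed scale: 1 (minor) to 5 (severe)
    last_reviewed: date

    @property
    def score(self) -> int:
        # Simple likelihood x impact score, a common but not universal convention
        return self.likelihood * self.impact


def due_for_review(risks, today, max_age_days=90):
    """Return risks not reviewed within max_age_days, highest score first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [r for r in risks if r.last_reviewed < cutoff]
    return sorted(stale, key=lambda r: r.score, reverse=True)


# Hypothetical register entries for illustration only.
register = [
    Risk("Training data poisoning", 3, 5, date(2024, 1, 10)),
    Risk("Model output leaks personal data", 2, 4, date(2024, 5, 1)),
    Risk("Unpatched inference server", 4, 3, date(2024, 2, 20)),
]

overdue = due_for_review(register, today=date(2024, 6, 1))
for risk in overdue:
    print(risk.name, risk.score)
```

Running a check like this on a schedule turns "re-assess regularly" from a policy statement into a list of specific risks that someone needs to look at this week.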

3. Ensuring Compliance Amidst Changing Regulations

The world of AI is still, in many ways, the ‘Wild West’ when it comes to regulations. As authorities grapple with the rapid advancements in AI, regulations surrounding AI cybersecurity and data protection are in a state of flux. A robust GRC framework, however, ensures that AI implementations remain compliant with the latest guidelines. By doing so, it shields organizations from potential legal pitfalls and fosters trust among stakeholders.

4. Fortifying Security Through GRC

Beyond governance and compliance, at the heart of GRC lies a commitment to fortifying security. By integrating AI cybersecurity best practices, the GRC framework strengthens the defenses around AI systems and the vast pools of data they handle. This proactive security stance acts as a bulwark against external and internal threats, safeguarding the integrity of the AI system.

Tailoring GRC for AI’s Unique Landscape

While GRC provides a sturdy foundation, it’s essential to recognize that AI presents a unique set of challenges and risks. A one-size-fits-all approach will not suffice. Organizations need to tailor their GRC practices to cater to AI-specific vulnerabilities. This adaptability ensures that as AI cybersecurity threats evolve, the GRC framework remains relevant, resilient, and robust.

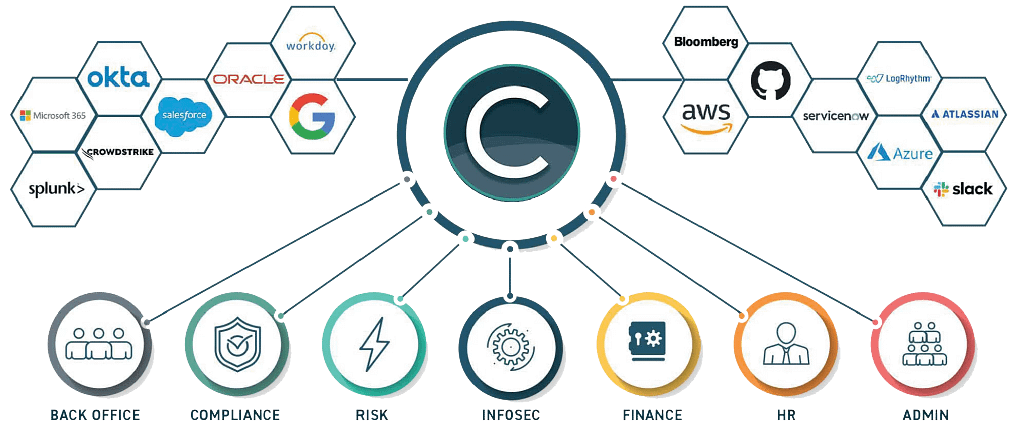

Compyl’s GRC platform was built to meet the pressing need for a comprehensive GRC solution, and it is a modern option organizations can trust. Here’s why:

- No-Code Customizability: The platform integrates smoothly with existing technology stacks, so no learning curve or overhaul is required.

- Breach Analysis: Real-time alerts when your email address or domain is found to be compromised.

- User-Friendly Interface: With a drag & drop feature, customizing processes becomes a breeze, eliminating the need for technical expertise and saving time and money.

- Automation at Its Best: Offering 24/7 compliance monitoring, the platform streamlines workflows, minimizes human error, and simplifies assessments, ensuring that nothing slips through the cracks.

- Tailored for AI Challenges: Recognizing the unique challenges posed by AI, Compyl’s platform is designed to address and overcome them, offering peace of mind to organizations.

Get a Free Security Assessment Today

As AI continues to play an integral role in our digital landscape, ensuring its security is paramount. A robust GRC framework, like that offered by Compyl’s GRC platform, provides the assurances needed to safeguard AI against potential cyber threats, ensuring a safer, more secure digital future. Reach out today and see if Compyl’s GRC solution can help you reach your goals.